AI Is Eating Your Data

Here’s the Encryption Tech Fighting Back

Think your data is private because you clicked some privacy checkboxes? It’s not.

Right now, tech companies hold your secrets including who you talk to, what you believe, where you’ve been, and what keeps you up at night. And they have a fiduciary obligation to their shareholders to maximize value, which often means they need to monetize that data.

Even if you uncheck “train on my data,” major LLM providers keep copies for at least 30 days1. And that’s just the beginning.

Your data isn’t private. It never was. But the technology exists which can change that. Too few know about it.

This post is adapted from my recent video and is a full transcript, lightly edited and formatted. Keep reading or watch the video:

The data == profit problem

I don’t think corporations are inherently evil. I personally run a corporation that aims to do good in the world. But the profit motive can be insidious. And when it comes to data, there’s a pervasive mentality in tech that it’s the “new oil,” which leads companies to collect more of it, analyze more of it, keep more of it, and find ways to monetize it. And by “it”, I mean OUR data.

This creates three massive problems.

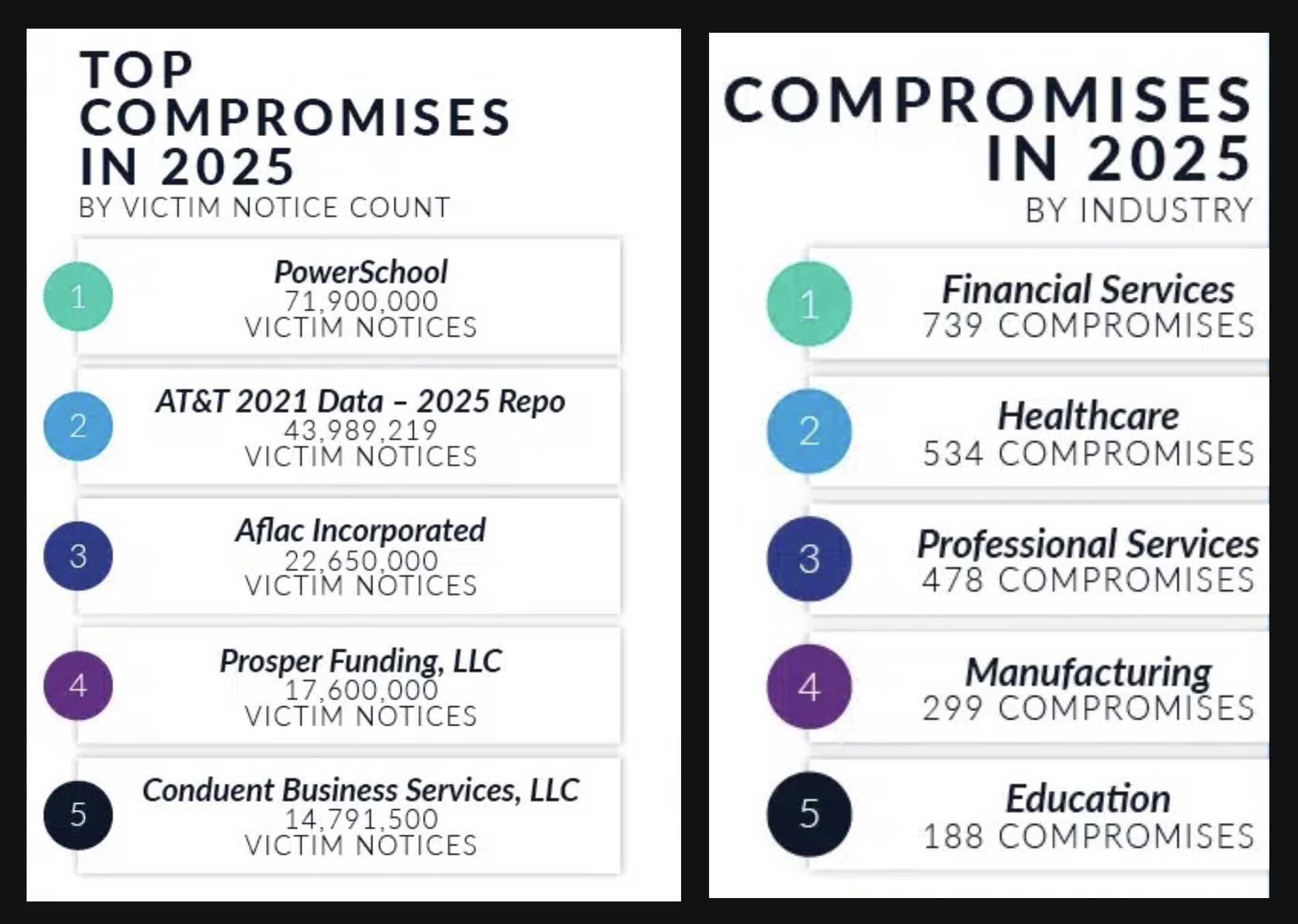

Excess data collection problem #1: security

Companies face minimal penalties when breaches happen2 so they invest just enough to clear basic regulatory requirements. Consequently, we see breaches in the news constantly. And though we weren’t the ones holding the data, it’s us who get hurt.

Excess data collection problem #2: government access

If you’re not a US citizen, American privacy laws don’t protect you. Period3. And even for citizens, when administrations pressure companies for data, or use administrative subpoenas that aren’t approved by a judge, companies comply.

Unfortunately, the Fourth Amendment doesn’t cover data you’ve “given” to a 3rd party so your rights in that scenario are effectively forfeit and you have to hope the tech company fights back on your behalf, which they might or might not do.

Excess data collection problem #3: AI (the big one)

The AI revolution runs on data. Your data. Even if you’ve opted out of training, they’re still using your information. They’re running it through models, storing it in vector databases, creating searchable indices of everything you’ve ever uploaded so they can feed it to AI4. Law firms are uploading client documents to ChatGPT. Accountants are feeding financial records to Claude. Enterprises are pouring proprietary information into AI tools at a staggering rate5. It isn’t just a problem for individuals, but ironically for businesses as well. Companies like Apple that haven’t been hoarding customer data (in a form they can read) are disadvantaged when it comes to training models, which is why Apple had to license a frontier model from Google recently.

Privacy has never been more fragile.

Solving privacy hell with PETs

There’s tech, called Privacy Enhancing Technologies (PETs), that companies can use to protect your data or your business’s data. Data can be held and used while keeping it safe even from the service provider’s own employees.

But that’s not the norm right now. And it won’t be until companies hear their customers demand it.

One of the commonly expressed reasons not to adopt data privacy tech is the need to be able to see the data and operate on it. And to find what’s relevant so an app can do its job. That’s where encrypted search comes in.

Encrypted search

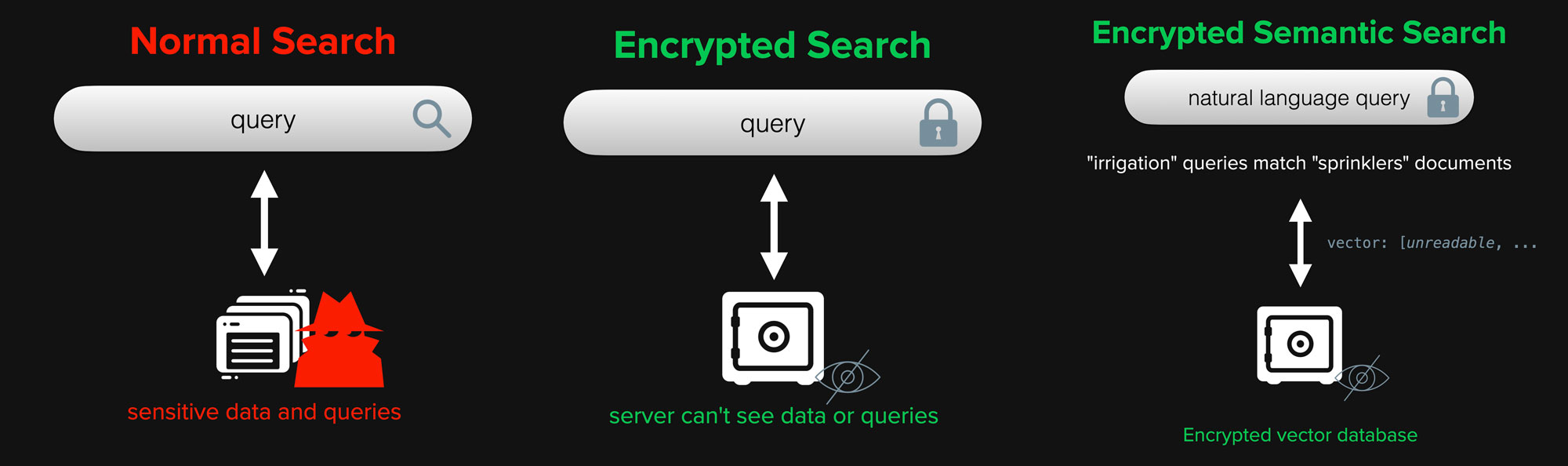

Here’s how normal search works: you type a query, the system looks through an index, finds matches, and returns results. Simple.

But that index? It contains your data in readable form. Anyone with access can see it and use it to recreate all the original documents, assuming they aren’t already sitting there alongside the index (making two vulnerable copies). Any subpoena, any curious employee, any hacker who gains access is able to read your private information.

But encrypted search flips this completely. In encrypted search, the query is encrypted, the index is encrypted, and the data (if held) is encrypted. And here’s the important part: the entity holding the index doesn’t have the decryption key. Or, in cases where the service needs limited access, the key is stored in a Hardware Security Module or KMS, the most secure data in the infrastructure.

Search still works. But only for authorized users.

Semantic search explainer

You know how in some search engines you can search for “irrigation” and get results about “sprinklers”? That’s likely semantic search. It’s search that understands meaning, not just keywords, and it works by converting your data into vectors: these long strings of numbers that capture meaning. This is the tech behind search in most AI workflows today (where natural language queries are important). And it’s used to search both text and images.

The technology exists to keep your data truly private. It’s just not the default yet.

Semantic search attacks

Now, most people assume those vectors are safe because they look like gibberish, but they’re not. Researchers have shown you can reverse vectors back into near-perfect reconstructions of the original text, complete with names, dates, and dollar amounts6.

That’s why encryption matters. Even the vectors need protection. Especially the vectors.

Researchers have shown you can reverse vectors back into near-perfect reconstructions of the original text.

Hybrid and keyword search

Increasingly, developers choose to use hybrid search, which is a combination of keyword search and vector search are used to find the data most relevant to what a user needs.

Keyword search is typically done using a classic keyword index where each keyword is in a list pointing back to the documents (and often locations in the documents) where the word occurs. These indices are also vulnerable and also typically unencrypted. Documents can be reproduced from indices and often the raw documents are already stored unencrypted alongside or within the search infrastructure.

Good news: we also have encrypted search for keyword search services with the same benefits as encrypted semantic search! Bad news: most companies today aren’t bothering to protect this data. Even Salesforce, who sells an add-on that encrypts customers’ data leaves the search indices unencrypted. Which is a rather large hole in their offered protection.

Even Salesforce, who sells an add-on that encrypts customers’ data leaves the search indices unencrypted.

Making things better

Here’s what you should look for:

- End-to-end encryption: Not just “encrypted in transit.” Not just “encrypted at rest.” Encrypted such that the service provider cannot read your data.

- Privacy-enhancing technologies sometimes called PETs

- Privacy-preserving search or encrypted search

- Zero-knowledge architectures

If you’re a business, make sure you are getting per-tenant application-layer encryption and that even search indices are encrypted.

Ask your vendors: Can you read my data? If the answer is yes, then hackers and the government can too, and that’s not good enough.

The technology exists to keep your data truly private. It’s just not the default yet. But it should be.

Read more about our vector encryption tech, Cloaked AI, our keyword encryption tech, Cloaked Search, or reach out to us and ask us how we can help you with your specific use case:

If the answer is yes, then hackers and the government can too, and that’s not good enough.

Footnotes

LLM Data Retention. OpenAI retains API data for 30 days by default for abuse monitoring. Anthropic similarly retains data for safety monitoring. Google’s Gemini API retains prompts for up to 30 days.

- OpenAI API Data Usage Policy: https://openai.com/policies/api-data-usage-policies

- Anthropic Privacy Policy: https://www.anthropic.com/privacy

- Google Gemini API Data Governance: https://docs.cloud.google.com/gemini/docs/discover/data-governance

Limited Breach Penalties. Under current US federal law, there is no comprehensive data breach penalty framework. The FTC has authority but penalties are often modest relative to company revenue. The EU’s GDPR provides for fines up to 4% of global revenue, but enforcement varies.

- FTC Data Security Enforcement: https://www.ftc.gov/business-guidance/privacy-security/data-security

- GDPR Article 83 (Penalties): https://gdpr-info.eu/art-83-gdpr/

Fourth Amendment and Non-Citizens. The Fourth Amendment’s protections against unreasonable search primarily apply to “the people,” interpreted by courts as US citizens and legal residents. Foreign nationals abroad have limited constitutional privacy protections regarding their data held by US companies.

- United States v. Verdugo-Urquidez (1990): https://supreme.justia.com/cases/federal/us/494/259/

- EFF on Foreigners and the Fourth Amendment: (link no longer available)

Data Use Beyond Training. Major AI providers distinguish between training on data and using data for inference, logging, safety monitoring, and improvement. Even opted-out data may be processed through models for response generation.

- OpenAI Enterprise Privacy: https://openai.com/enterprise-privacy

- Anthropic Usage Policy: https://www.anthropic.com/legal/aup

Enterprise AI Data Leakage. Cyberhaven research (2023) found 11% of data employees paste into ChatGPT is confidential. Samsung banned ChatGPT after engineers uploaded proprietary source code. Fishbowl survey found 68% of workers using AI tools do so without employer knowledge.

Vector Embedding Inversion. Research demonstrates that text embeddings can be inverted to recover substantial portions of original text. Semantic content recovery from common embedding models has been proven, with subsequent work improving attack accuracy.

- Morris et al. (2023) “Text Embeddings Reveal (Almost) As Much As Text”: https://arxiv.org/abs/2310.06816